AI Portfolio Management: The “Ghost Portfolio” Risk in Data & AI Initiatives

At some point in the last eighteen months, you probably ran a meeting to get visibility into what your organization is actually doing in AI. Someone pulled a deck together. A few team leads sent updates. And by the end of it, the original question was still unanswered.

The uncomfortable part is not that the answer was incomplete. The uncomfortable part is that nobody in the room was surprised.

Most data leaders in 2026 are not struggling because they lack AI activity. They are struggling because they have too much of it, tracked in too many places, owned by too many teams, and connected to business outcomes by almost nobody. What is missing is the one thing that makes all of it defensible: a clear, shared view of what the AI portfolio is actually worth.

There is a name for what accumulates in that absence. The ghost portfolio. Technically present. Practically invisible. And quietly undermining every strategic conversation the CDO needs to win.

A Visibility & Prioritization Problem, Not a Governance Failure

Most CDOs reading this already have governance in place. The AI board exists. The ownership model is documented. The policies are written, reviewed, and signed off by people who absolutely meant them. According to Gartner, 55% of organizations have an AI board and 54% have a dedicated AI leader. The structures are real.

And yet, when someone from the executive committee asks which AI initiatives are delivering and which should stop, the honest answer takes days to assemble.

This is the ghost portfolio in its plainest form: every initiative technically accounted for somewhere, none of it visible from where decisions are actually made. It is the AI governance vs execution divide at its most concrete. Gartner also found that only 25% of organizations clearly define accountability for specific AI initiatives, so governance and AI initiative visibility turn out to be two separate problems. When initiatives are spread across an average of three business functions per organization, each with its own backlog and its own informal memory, AI portfolio visibility quietly breaks down.

Not through negligence. Through growth.

Four Failure Modes That Hide in Plain Sight

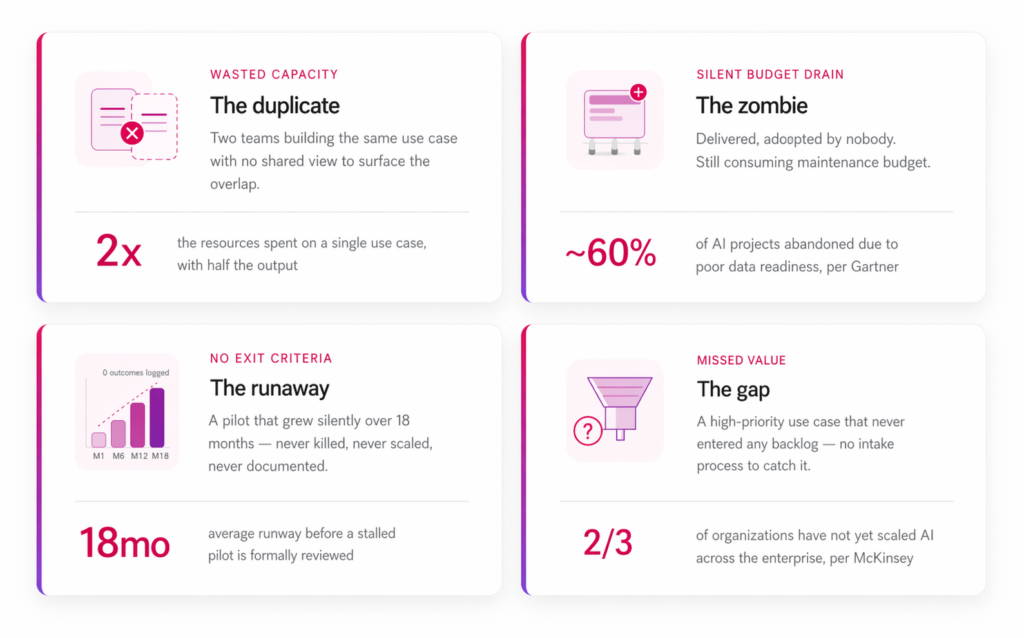

The ghost portfolio is not empty. That is what makes it dangerous. It is full of initiatives, some delivering, some stalled, some long dead, and the organization has only partial sight of which is which. Partial visibility feels manageable. It is precisely the condition under which four failure modes thrive.

The Duplicate. Two teams, sometimes two geographies, building the same AI use case without knowing it. No single view exists to make the overlap visible before resources are committed.

The Zombie. A delivered product, adopted by nobody, still consuming maintenance budget. Gartner estimates up to 60% of AI projects may be abandoned due to poor data readiness. Few organizations know which of their own products are already dead.

The Runaway. A pilot that expanded quietly over eighteen months and never once documented a business goal achieved. Not killed. Not scaled. Just running.

The Gap. A high-priority use case that never made it into any backlog because no formal demand intake process exists. McKinsey found that nearly two-thirds of organizations have not yet scaled AI across the enterprise. The Gap is likely one reason why.

None of these signal dysfunction. They signal that AI project failures and AI use case duplication accumulate naturally in any organization where portfolio visibility is partial. Which is most of them.
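Because each failure mode is defined by a few observable attributes, it can in principle be flagged automatically once a shared inventory exists. The sketch below is a minimal Python illustration under stated assumptions, not a feature of any particular product. The `Initiative` record, its fields, the stage names and the `RUNAWAY_MONTHS` threshold (borrowed from the eighteen months in the Runaway description) are all hypothetical, and the sample data is invented. A Duplicate is approximated as one use-case label pursued by more than one team, a Zombie as a production product with zero active users, a Runaway as a long-running pilot with no documented goal achieved, and a Gap as a priority demand that appears nowhere in the inventory.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date

# Hypothetical threshold, taken from the "eighteen months" in the Runaway example.
RUNAWAY_MONTHS = 18


@dataclass
class Initiative:
    """Hypothetical inventory record; field names are illustrative only."""
    name: str
    use_case: str            # normalized label, so overlapping work is comparable
    team: str
    stage: str               # "pilot", "production" or "retired"
    started: date
    active_users: int = 0
    goal_achieved: bool = False


def months_between(start: date, end: date) -> int:
    """Whole calendar months between two dates, ignoring the day of the month."""
    return (end.year - start.year) * 12 + (end.month - start.month)


def classify(inventory, priority_demands, today):
    """Return the names (or use-case labels) matching each failure mode."""
    findings = {"duplicate": [], "zombie": [], "runaway": [], "gap": []}
    teams_by_use_case = defaultdict(set)
    for item in inventory:
        if item.stage == "retired":
            continue  # retired work no longer competes for resources
        teams_by_use_case[item.use_case].add(item.team)
        if item.stage == "production" and item.active_users == 0:
            findings["zombie"].append(item.name)
        if (item.stage == "pilot"
                and months_between(item.started, today) >= RUNAWAY_MONTHS
                and not item.goal_achieved):
            findings["runaway"].append(item.name)
    # Duplicate: the same use case pursued by more than one team.
    findings["duplicate"] = sorted(
        uc for uc, teams in teams_by_use_case.items() if len(teams) > 1
    )
    # Gap: a priority demand that never entered the inventory at all.
    known = {item.use_case for item in inventory}
    findings["gap"] = sorted(d for d in priority_demands if d not in known)
    return findings


inventory = [
    Initiative("Churn model EU", "churn prediction", "Marketing EU", "production",
               date(2024, 3, 1), active_users=40, goal_achieved=True),
    Initiative("Churn model US", "churn prediction", "Marketing US", "pilot",
               date(2025, 9, 1)),
    Initiative("Invoice OCR", "invoice extraction", "Finance", "production",
               date(2023, 6, 1)),
    Initiative("Demand sensing", "demand forecasting", "Supply", "pilot",
               date(2024, 1, 15)),
]
print(classify(inventory, ["demand forecasting", "contract review"], date(2026, 3, 1)))
# → {'duplicate': ['churn prediction'], 'zombie': ['Invoice OCR'], 'runaway': ['Demand sensing'], 'gap': ['contract review']}
```

The logic is the easy part. What makes it work in practice is the input: consistent use-case labels and a reliable adoption signal, which only a shared inventory provides.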

Why Poor AI Portfolio Management Becomes a Strategic Risk

The ghost portfolio is an operational problem until it isn’t. At some point, invisibility stops being a management inconvenience and becomes an AI strategy risk with three very different faces.

Budget

When a funding review arrives and the CDO cannot clearly articulate what is running and what it is delivering, the conversation stops being about data and AI. It becomes about whether the function can be trusted with capital. AI ROI challenges are not resolved by better technology. They are resolved by better evidence.

Compliance

The EU AI Act’s obligations for most high-risk systems apply from August 2026. Its foundational requirement is not a policy or a committee. It is an inventory: every AI system classified by risk level, documented, traceable to the data it consumes. Over half of organizations currently lack that. The regulation does not ask whether the organization intended to comply. It asks whether the systems are documented. That is an AI compliance risk no governance policy can retroactively fix.

Organizational trust

Business stakeholders do not lose confidence in data and AI overnight. They lose it across a series of conversations where the answers were incomplete, the outcomes unclear, and the AI investment risk felt higher than the return justified. That erosion is quiet. And very hard to reverse.

The ghost portfolio does not stay in the backroom. It walks into every strategic conversation the CDO needs to win.

What Effective Data & AI Portfolio Management Actually Requires

Most organizations are not starting from zero. They have Jira, ServiceNow, or a PPM platform tracking delivery. They have governance councils producing policies. The problem is that none of these tools were built for what data and AI portfolio management actually requires. They track software delivery. They do not handle AI model lifecycles, regulatory risk classification, or value realization against business outcomes. DataGalaxy Portfolio is designed to connect to them, adding the portfolio governance layer those tools were never built to provide.

The discipline gap is harder to close than the tooling gap. End-to-end AI lifecycle management requires sustained coordination across business, data, risk, and technology teams through the full arc from demand intake to value measurement. Most organizations manage fragments of that arc. The result is four capability gaps:

- A shared inventory of all AI initiatives, visible across teams and business units

- A demand qualification workflow that filters and prioritizes before resources are committed

- A product lifecycle framework covering the full arc from ideation to retirement

- A value and risk layer connecting every product to a measurable business outcome

These four capabilities are not a fix for the ghost portfolio. They are the operating layer that prevents it from forming in the first place.
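As a rough illustration of how the four capabilities can meet in a single record, here is a minimal Python sketch. The `Product` fields, the `Stage` names, the allowed transitions and the rule that a demand needs an owner, a business goal, a KPI and a risk class before it is qualified are hypothetical assumptions, not a prescribed model. The example `risk_class` labels loosely echo the EU AI Act's risk tiers and are placeholders only.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Stage(Enum):
    IDEATION = "ideation"
    QUALIFIED = "qualified"
    BUILD = "build"
    PRODUCTION = "production"
    RETIRED = "retired"


# Hypothetical lifecycle: which stages may follow each stage
# (any live stage can move to retirement).
ALLOWED = {
    Stage.IDEATION: {Stage.QUALIFIED, Stage.RETIRED},
    Stage.QUALIFIED: {Stage.BUILD, Stage.RETIRED},
    Stage.BUILD: {Stage.PRODUCTION, Stage.RETIRED},
    Stage.PRODUCTION: {Stage.RETIRED},
    Stage.RETIRED: set(),
}

# Hypothetical qualification rule: fields a demand must fill before it is qualified.
REQUIRED_FOR_QUALIFICATION = ("owner", "business_goal", "kpi", "risk_class")


@dataclass
class Product:
    """One inventory entry carrying lifecycle, value and risk attributes."""
    name: str
    owner: Optional[str] = None
    business_goal: Optional[str] = None
    kpi: Optional[str] = None
    risk_class: Optional[str] = None  # e.g. "minimal", "limited" or "high"
    stage: Stage = Stage.IDEATION


def qualification_gaps(product: Product) -> list:
    """List the required fields that are still empty."""
    return [f for f in REQUIRED_FOR_QUALIFICATION if not getattr(product, f)]


def advance(product: Product, target: Stage) -> None:
    """Move a product to `target`, enforcing the lifecycle and the qualification gate."""
    if target not in ALLOWED[product.stage]:
        raise ValueError(f"{product.stage.value} -> {target.value} is not allowed")
    missing = qualification_gaps(product)
    if target is Stage.QUALIFIED and missing:
        raise ValueError(f"cannot qualify {product.name}: missing {', '.join(missing)}")
    product.stage = target


demand = Product("Invoice OCR", owner="Finance data lead")
print(qualification_gaps(demand))  # → ['business_goal', 'kpi', 'risk_class']
```

The design choice worth noting is that qualification is a gate on a state transition rather than a separate checklist, so nothing reaches the build stage without an owner, a stated goal, a KPI and a risk classification attached.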

How Roche Got Visibility Across 300 AI Initiatives

Roche faced this exact situation: more than 300 data and AI initiatives spread across geographies and teams. Strong ambition and real investment, but fragmented visibility into AI initiatives and no clear line between initiatives and strategic outcomes.

The approach they took was built on three moves: consolidating all initiatives into a single portfolio, establishing value lineage from use cases through to KPIs and strategic objectives, and introducing a consistent valorization framework to prioritize based on business value.

The result was a 100% increase in portfolio visibility, 300+ use cases centralized in one environment, and faster value-based decision making across the organization. The takeaway Roche chose to lead with at the 2026 Gartner Data and Analytics Summit captures it precisely: “you can’t scale enterprise AI strategy without visibility and value alignment.”
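Value lineage, as described in the Roche example, can be pictured as links from each use case to the KPIs it moves and from each KPI to the strategic objectives it serves. The Python sketch below is not Roche's implementation; the mapping names (`USE_CASE_KPIS`, `KPI_OBJECTIVES`) and the sample entries are hypothetical. It flags use cases that do not reach any strategic objective through at least one KPI, which is where a value-alignment conversation would start.

```python
# Hypothetical lineage links: use case -> KPIs it moves, KPI -> objectives it serves.
USE_CASE_KPIS = {
    "churn prediction": ["retention rate"],
    "invoice extraction": ["days to close"],
    "chatbot FAQ": [],
}
KPI_OBJECTIVES = {
    "retention rate": ["grow recurring revenue"],
    "days to close": [],
}


def objectives_for(use_case: str) -> set:
    """Strategic objectives reachable from a use case through its KPIs."""
    objectives = set()
    for kpi in USE_CASE_KPIS.get(use_case, []):
        objectives.update(KPI_OBJECTIVES.get(kpi, []))
    return objectives


def unaligned(use_cases) -> list:
    """Use cases with no path to any strategic objective."""
    return sorted(uc for uc in use_cases if not objectives_for(uc))


print(unaligned(USE_CASE_KPIS))  # → ['chatbot FAQ', 'invoice extraction']
```

Note that "invoice extraction" is flagged even though it has a KPI: the KPI itself is not tied to any objective, so the chain breaks one step later.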

An 8-Step Framework to Put AI Portfolio Management Into Practice

The methodology behind this exists as a practical guide. Eight steps, each building on the last, designed to take an organization from scattered initiatives to a portfolio that is visible, governed, and connected to business outcomes. They are grouped in pairs below. None of them requires overhauling what already exists.

- Inventory and AI demand management: start by capturing what is actually running, then build the AI use case prioritization framework that controls what enters the portfolio next

- Value mapping and data and AI mapping: connect every product to an explicit business goal, and identify the assets required to deliver it

- Product lifecycle and risk management: track products across their full arc and build compliance assessment into the workflow from day one, not as an afterthought

- AI value tracking and operational tracking: measure how each product contributes to business outcomes over time, and connect portfolio metrics to the delivery tools already in use

Each step is achievable at whatever maturity level the organization is starting from. Together they form the AI portfolio management framework that most organizations have been assembling in fragments, without ever completing.
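The use case prioritization framework in the first pair of steps, like the value-based prioritization in the Roche example, is often built as a weighted scoring model. The Python sketch below assumes that approach. The criteria (`business_value`, `data_readiness`, `feasibility`), the 1 to 5 scale and the weights are hypothetical and not taken from the guide.

```python
# Hypothetical criteria and weights (weights sum to 1.0); each criterion is scored 1 to 5.
WEIGHTS = {"business_value": 0.5, "data_readiness": 0.3, "feasibility": 0.2}


def priority_score(scores: dict) -> float:
    """Weighted sum of criterion scores, rounded to two decimals."""
    for criterion in WEIGHTS:
        if not 1 <= scores[criterion] <= 5:
            raise ValueError(f"{criterion} must be scored from 1 to 5")
    return round(sum(w * scores[c] for c, w in WEIGHTS.items()), 2)


def rank(demands: dict) -> list:
    """Demands ordered by descending score; ties broken alphabetically."""
    scored = [(name, priority_score(s)) for name, s in demands.items()]
    return sorted(scored, key=lambda pair: (-pair[1], pair[0]))


demands = {
    "contract review": {"business_value": 4, "data_readiness": 2, "feasibility": 3},
    "demand forecasting": {"business_value": 5, "data_readiness": 4, "feasibility": 3},
    "chatbot FAQ": {"business_value": 2, "data_readiness": 5, "feasibility": 5},
}
print(rank(demands))
# → [('demand forecasting', 4.3), ('chatbot FAQ', 3.5), ('contract review', 3.2)]
```

Weighting business value most heavily mirrors the emphasis on value alignment throughout this article; in practice the weights should come from the organization's own strategic objectives, and a low data readiness score is an early warning of the abandonment risk Gartner describes.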

Get the full guide

Download the 8-step guide to building a systematic data and AI portfolio.


Conclusion

The ghost portfolio compounds quietly until it becomes a strategic problem. By then, the budget conversations are harder, the compliance exposure is real, and the business stakeholders who once championed data and AI are asking sharper questions than before.

The organizations making enterprise AI strategy work are those that can answer three questions with confidence at any point: what is running, what is it delivering, and what should stop. Governance frameworks on paper do not produce that clarity. Portfolio discipline in practice does.

The starting point is smaller than most CDOs expect. One honest inventory. One qualification process. One value conversation grounded in evidence. Not a program. A decision.

Once the portfolio is visible, every decision that follows becomes faster, cheaper, and easier to defend. Not an operational upgrade. A strategic advantage. And it starts with eight steps.

Q&A

What is a ghost portfolio in AI?

A ghost portfolio is a collection of data and AI initiatives that are technically tracked somewhere in the organization but practically invisible from where decisions are made. Every initiative exists in a backlog, a spreadsheet, or a team lead’s memory. None of it adds up to a coherent, shared view of what is running, what it is delivering, and what should stop.

Why do AI initiatives fail to deliver value?

Four common failure modes recur: the Duplicate, where two teams build the same use case without knowing it; the Zombie, a delivered product nobody adopted; the Runaway, a pilot that expanded without ever documenting a business goal; and the Gap, a priority use case that never entered a formal backlog. None of these signal organizational dysfunction. They signal that partial portfolio visibility is enough for failure to accumulate.

What is AI portfolio management?

AI portfolio management is the organizational discipline of maintaining a single, governed view of all data and AI initiatives across their full lifecycle. It covers four capabilities: a shared inventory, a demand qualification process, a product lifecycle framework, and a value and risk layer. Distinct from AI governance and project management, it is the operating layer that connects both.

What is the difference between AI governance and AI portfolio visibility?

AI governance defines the policies and accountability structures that determine how AI should be managed. AI portfolio visibility is the operational ability to see, at any point, what initiatives are running, what they are delivering, and how they connect to business outcomes. According to Gartner, 55% of organizations have an AI board but only 25% have clearly defined accountability for specific AI initiatives.

Why is AI portfolio visibility a strategic risk?

Three reasons. Budget: without clear evidence of what is running and delivering, funding conversations shift from data and AI to whether the function can be trusted with capital. Compliance: the EU AI Act requires a documented, classified inventory of every AI system. Organizational trust: incomplete answers erode stakeholder confidence quietly, and that erosion is very hard to reverse.